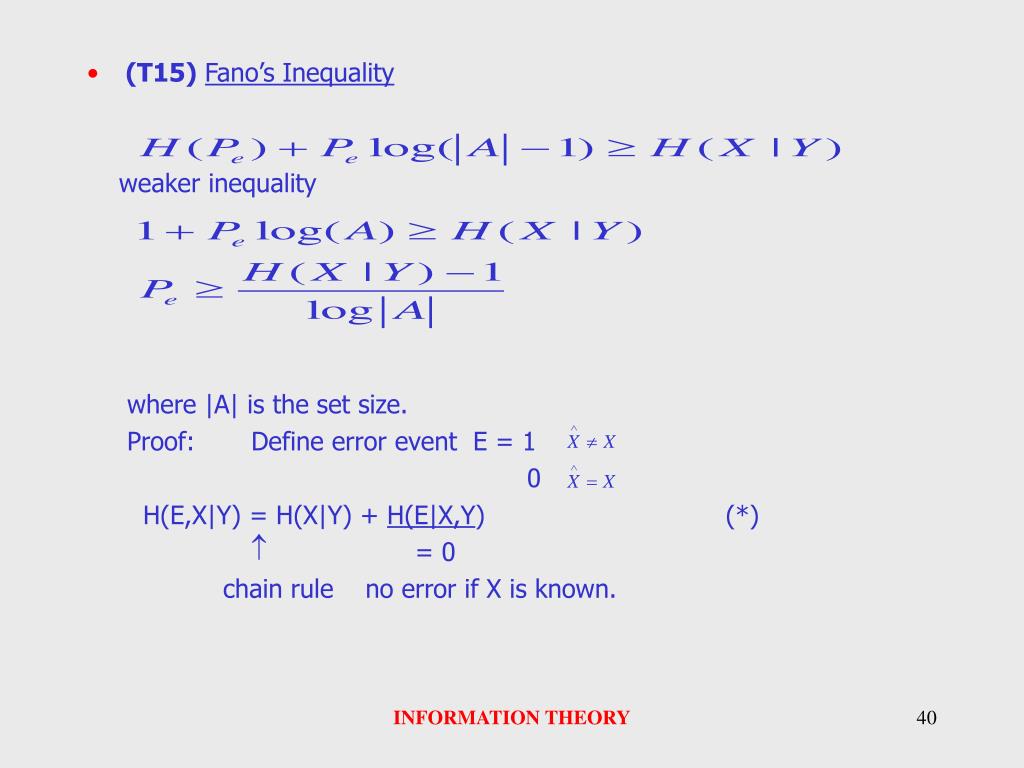

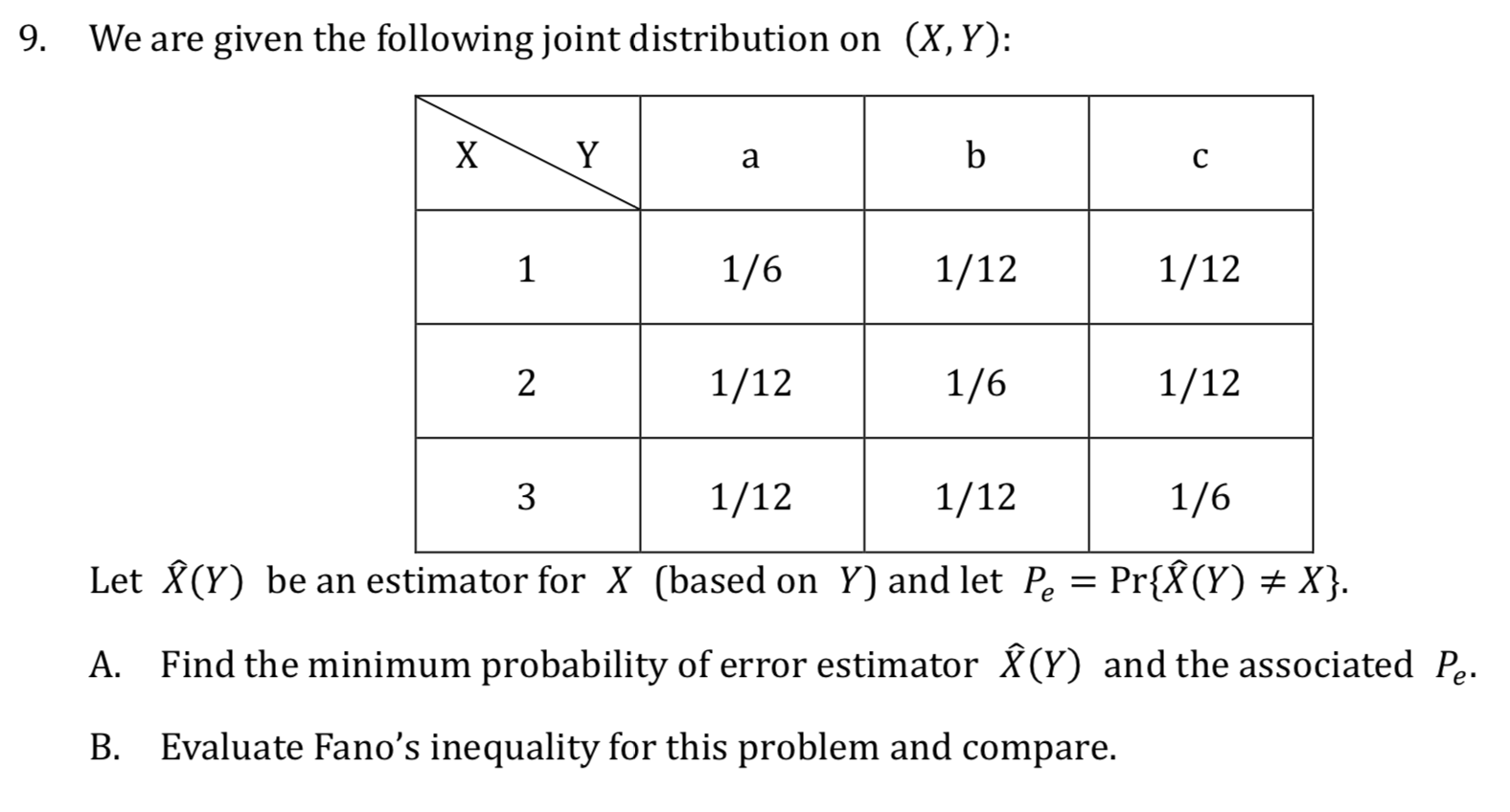

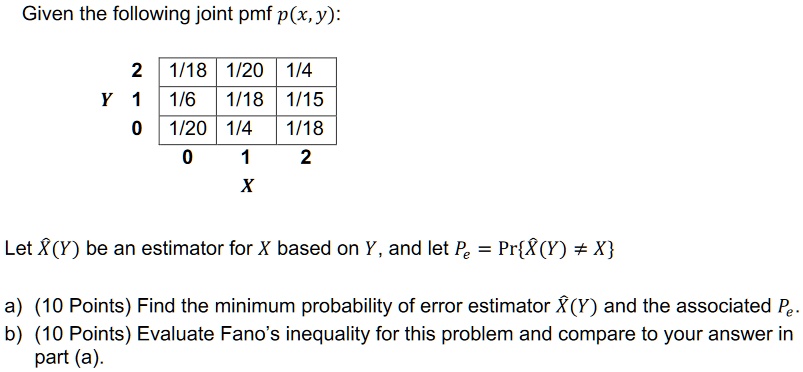

SOLVED: Given the following joint pmf p(x,y): 1/18 1/20 1/4 1/6 1/18 1/15 1/20 1/4 1/18. Let X̂(Y) be an estimator for X based on Y, and let P_e = Pr{X̂(Y) ≠ X} (10
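
The problem statement above is cut off, so what it asks is not shown. As a hedged sketch only, the snippet below assumes the nine values fill a 3×3 table row by row, with rows indexed by x and columns by y (the source does not confirm this layout). Under that assumption it computes the error probability of the MAP estimator, the conditional entropy H(X|Y), and the right-hand side of the standard Fano inequality H(X|Y) ≤ H_b(P_e) + P_e log2(|X| − 1). The function names are illustrative, not taken from the source.

```python
from fractions import Fraction as F
import math

# ASSUMPTION: row-major 3x3 layout, rows = x, columns = y (not stated in the source).
P = [
    [F(1, 18), F(1, 20), F(1, 4)],
    [F(1, 6), F(1, 18), F(1, 15)],
    [F(1, 20), F(1, 4), F(1, 18)],
]


def map_error(p):
    """Error probability of the MAP estimator: 1 - sum_y max_x p(x, y)."""
    columns = zip(*p)  # each column holds p(., y) for one value of y
    return 1 - sum(max(col) for col in columns)


def cond_entropy(p):
    """H(X|Y) in bits: -sum_{x,y} p(x, y) * log2(p(x, y) / p(y))."""
    p_y = [sum(col) for col in zip(*p)]
    h = 0.0
    for row in p:
        for j, pxy in enumerate(row):
            if pxy > 0:
                h -= float(pxy) * math.log2(float(pxy / p_y[j]))
    return h


def binary_entropy(q):
    """Binary entropy H_b(q) in bits, with H_b(0) = H_b(1) = 0."""
    q = float(q)
    if q in (0.0, 1.0):
        return 0.0
    return -q * math.log2(q) - (1 - q) * math.log2(1 - q)


def fano_rhs(pe, alphabet_size):
    """Right-hand side of Fano's inequality: H_b(pe) + pe * log2(|X| - 1)."""
    return binary_entropy(pe) + float(pe) * math.log2(alphabet_size - 1)


if __name__ == "__main__":
    pe = map_error(P)
    print("MAP error probability:", pe)
    print("H(X|Y) in bits:", cond_entropy(P))
    print("Fano right-hand side at that P_e:", fano_rhs(pe, len(P)))
```

Exact fractions are used for the table so that the normalization and the MAP error can be checked without rounding; only the entropies are computed in floating point.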

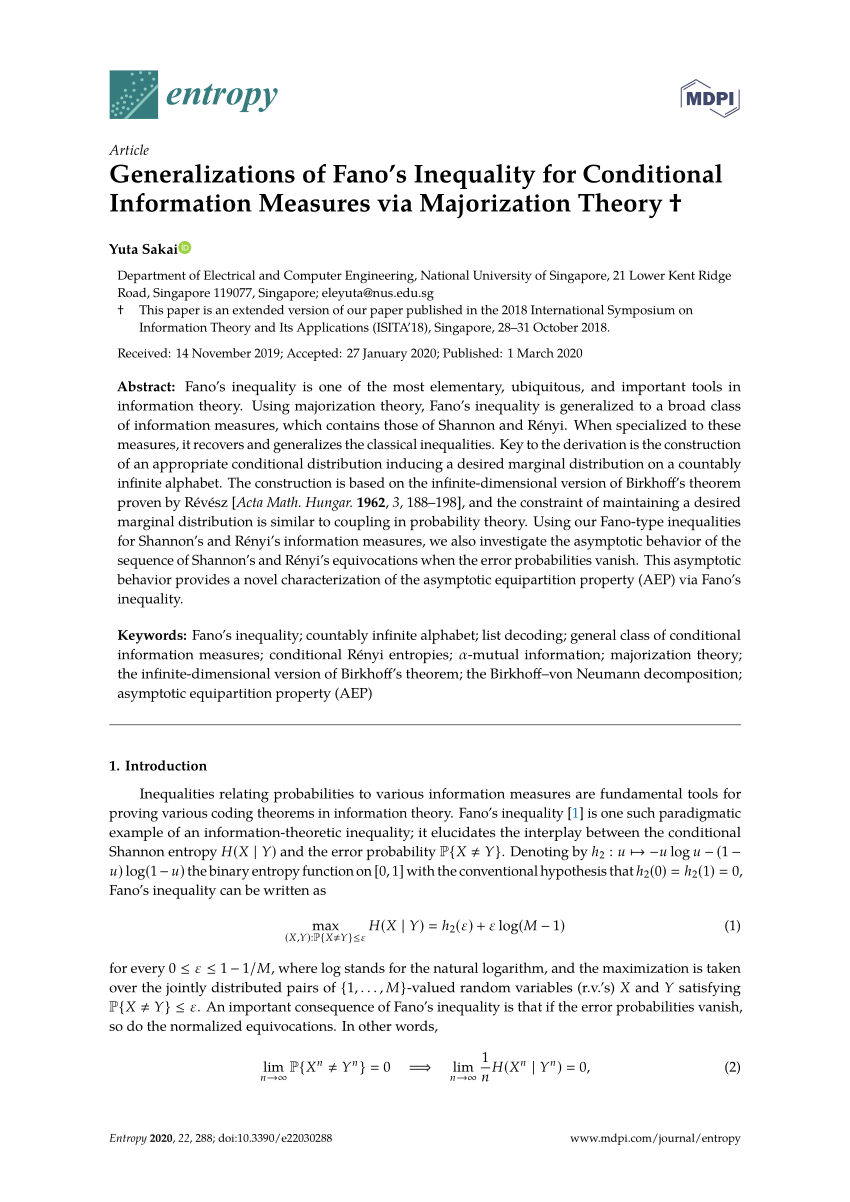

Entropy | Free Full-Text | Generalizations of Fano's Inequality for Conditional Information Measures via Majorization Theory | HTML

(PDF) Generalizations of Fano's Inequality for Conditional Information Measures via Majorization Theory

![[PDF] Generalizations of Fano's Inequality for Conditional Information Measures via Majorization Theory † | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/69d78200c0020c45f5f47729236f198a549ac77e/11-Figure2-1.png)

[PDF] Generalizations of Fano's Inequality for Conditional Information Measures via Majorization Theory † | Semantic Scholar

![[PDF] An Extension of Fano's Inequality for Characterizing Model Susceptibility to Membership Inference Attacks | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/77678b84999b6c470abc7939e777107c2b84a531/7-Figure3-1.png)

[PDF] An Extension of Fano's Inequality for Characterizing Model Susceptibility to Membership Inference Attacks | Semantic Scholar

Figure 1 from Beyond Fano's inequality: bounds on the optimal F-score, BER, and cost-sensitive risk and their implications | Semantic Scholar

Refinement of Two Fundamental Tools in Information Theory Raymond W. Yeung Institute of Network Coding The Chinese University of Hong Kong Joint work with. - ppt download

Jonathan Scarlett on Twitter: "Information theory puzzle: Can the standard Fano's inequality give a tight bound for this extremely simple adaptive problem? @BristOliver @mraginsky @BernhardGeiger @CindyRush @DenizGunduz1 @giuseppe_durisi https://t.co ...

12. Shannon's Information Measures : Mutual Information, Conditional Mutual Information, Chain Rules, Kullback Leibler Distance, Information Divergence, Fano's Inequality, Markov Chain, Data Processing Theorem